Europe's AI Compass

Xime Amado

The European Compass

A guide to understanding how Europe classifies the risks of artificial intelligence — and what is at stake.

Artificial intelligence is no longer a laboratory promise. It is in the search engine that summarizes answers before you finish typing the question, in the bank that detects fraud in milliseconds, in the hospital that analyzes medical images, in the school that personalizes exercises, and in the company that filters résumés before a human sees them. The question is no longer whether we will live with AI. The question is what map we will use.

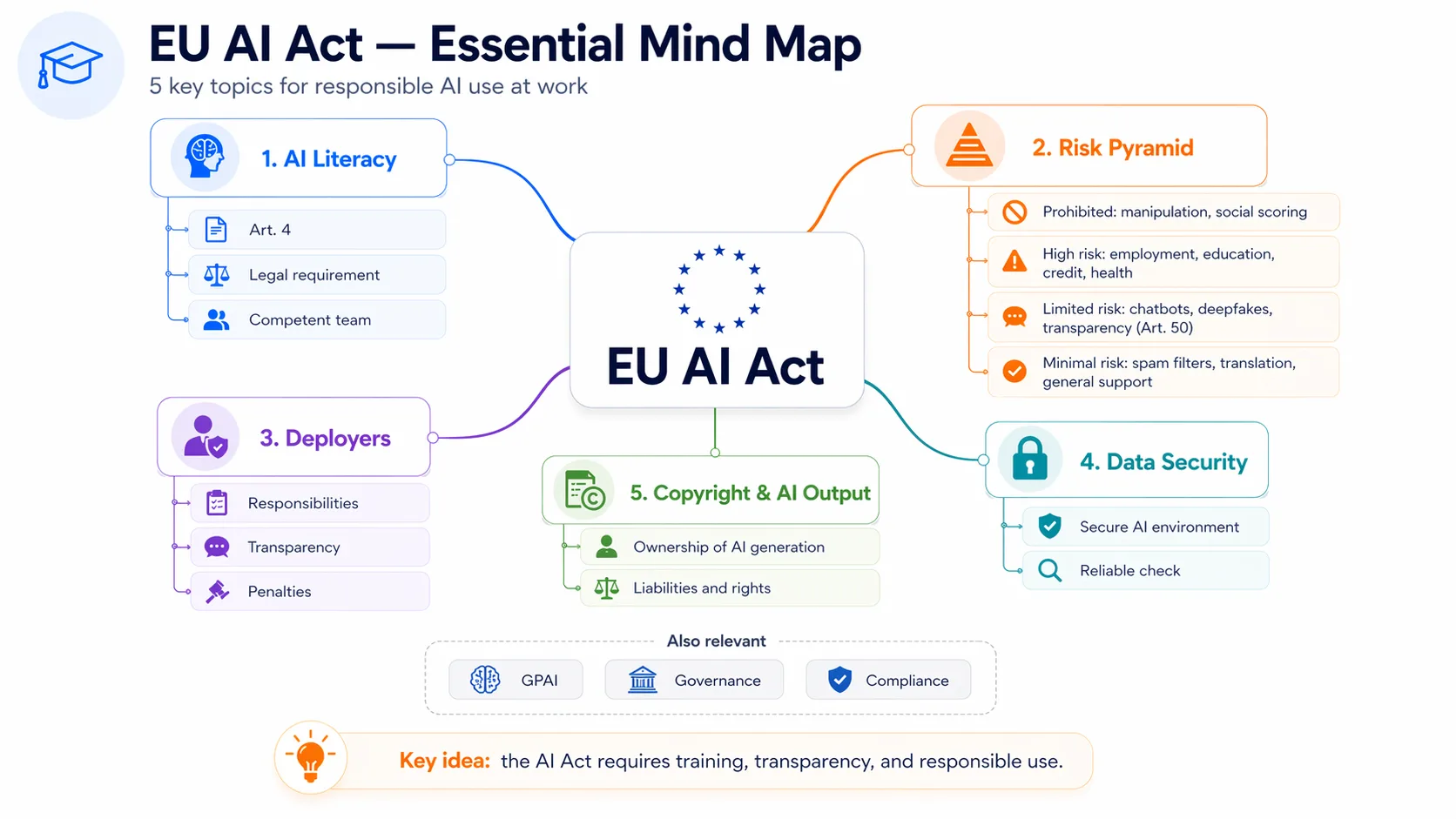

In Europe, that map is called the AI Act. The law entered into force on August 1, 2024, and its obligations take effect in phases through 2026 and 2027. Some already apply, including the bans on prohibited practices, the AI literacy requirements, and the rules for general-purpose AI models. [^1] It starts from a simple premise: not all uses of AI carry the same weight.

A classification of consequences, not technology

A music-recommendation app is not the same as a system that can influence a diagnosis, a loan, a school placement, or a hiring decision. That is why the EU classifies AI not only by what it is, but by what it can do to someone.

The European Commission describes its approach as a combination of excellence and trust: promoting research, industrial capacity, and innovation, while also protecting safety, fundamental rights, and oversight. [^2] In practice, that approach becomes a risk scale.

At the base are minimal-risk uses: recommendations, spam filters, video games, and everyday low-impact tools. These are not the central focus of regulation.

One level above is limited risk. The key here is transparency: if someone is speaking with a chatbot, they should know they are not speaking with a human. If a piece of content was generated by AI, that should be identifiable. The rule does not say stop; it says disclose.

The critical level is high risk. This includes systems used in healthcare, employment, education, justice, security, or essential services: areas where an error does not merely inconvenience someone. It can close a door. In these cases, the AI Act requires risk management, quality data, technical documentation, security controls, and human oversight. [^3]

At the top are unacceptable-risk uses: practices the Act prohibits because they are considered especially harmful to fundamental rights. These include social scoring and certain uses of biometric identification or emotion recognition in sensitive contexts. [^4]

The rule of thumb is simple: the greater the impact of a tool on a person, the stricter the control it must meet.
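
For readers who think in code, the scale above can be sketched as an ordered list of tiers, each with its own obligations. This is only an illustration under stated assumptions: the names `RiskTier`, `OBLIGATIONS`, `EXAMPLE_USES`, and `obligations_for` are hypothetical, the obligations are paraphrased from this article rather than from the legal text, and placing a real system in a tier requires reading the Act itself.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    """The four tiers described in this article, ordered by strictness."""
    MINIMAL = 0
    LIMITED = 1
    HIGH = 2
    UNACCEPTABLE = 3


# Obligations per tier, paraphrased from the article (an assumption, not legal text).
OBLIGATIONS = {
    RiskTier.MINIMAL: [],
    RiskTier.LIMITED: [
        "disclose that the user is interacting with AI",
        "make AI-generated content identifiable",
    ],
    RiskTier.HIGH: [
        "risk management",
        "quality data",
        "technical documentation",
        "security controls",
        "human oversight",
    ],
    RiskTier.UNACCEPTABLE: ["prohibited"],
}

# Hypothetical lookup table built from the examples used in the article.
EXAMPLE_USES = {
    "spam filter": RiskTier.MINIMAL,
    "music recommendation": RiskTier.MINIMAL,
    "customer-service chatbot": RiskTier.LIMITED,
    "resume screening": RiskTier.HIGH,
    "credit approval": RiskTier.HIGH,
    "social scoring": RiskTier.UNACCEPTABLE,
}


def obligations_for(use_case: str) -> list[str]:
    """Return the obligations attached to the tier of a known example use."""
    return OBLIGATIONS[EXAMPLE_USES[use_case]]


if __name__ == "__main__":
    for use, tier in EXAMPLE_USES.items():
        print(f"{use}: {tier.name} -> {obligations_for(use)}")
```

Because the tiers are ordered, a comparison such as `RiskTier.HIGH > RiskTier.LIMITED` holds, which mirrors the rule of thumb: the higher the tier, the longer the list of controls, until the use is not allowed at all.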

The tension nobody wants to name

The most interesting part of the AI Act is not only in its definitions. It is in the contradiction it tries to hold together.

Europe wants to regulate. But it also wants to compete. And that second part is often lost in the conversation.

While the United States, China, and much of the global market push AI at the speed of platforms, venture capital, and talent concentration, the EU is trying to build a more orderly path. That bet has an obvious virtue: it protects. It also carries a real risk: if the rules become too heavy or too slow, Europe may end up watching others turn the technology into business, infrastructure, and geopolitical power.

That is why the European approach does not end with the law. The Commission also talks about public investment, talent development, access to data, supercomputing, AI Factories, and direct support for startups and SMEs. The AI Continent Action Plan aims to make Europe more competitive in AI and to bring the technology into sectors such as healthcare, education, industry, and environmental sustainability. [^2] AI Factories are designed to provide access to computing infrastructure, talent, and innovation services, with priority for startups and SMEs. [^5]

The bet is that the technology should not remain trapped in official documents or laboratories, but reach hospitals, industries, schools, public administrations, and small businesses. The stated objective is not to slow the race, but to run it on a more clearly marked course.

Whether that is possible — regulating without slowing down, protecting without excluding — is the question the AI Act itself will have to answer in the coming years. The law exists. Implementation will be the test.

What changes for people

For any citizen, what matters is not the technical language of regulation. It is the daily consequence.

If AI helps recommend a movie, the margin of error is small and the cost of getting it wrong is low. If it helps decide who receives a job opportunity, a credit approval, or a medical diagnosis, the story changes completely. At that point, it is no longer enough to say “the algorithm decided.” There must be an explanation, the possibility of review, and, when appropriate, accountability.

AI does not have a dark side of its own. It does not wake up with intentions. The problems appear elsewhere: in poorly chosen data, in systems presented as infallible, in organizations that automate without review, in governments that deploy technology without checks and balances, and in users who turn it into a tool for manipulation. Artificial intelligence does not come with built-in morality. It amplifies the morality of those who design it, buy it, and apply it.

A sign on a road already full of traffic

That may be the best way to read the AI Act: it is not an ethical medal or a brake on the future. It is an attempt to put signs on a road that is already full of traffic and that nobody built from a blueprint.

AI will keep moving forward, with or without Europe. The question is whether it will move as a black box — opaque, unauditable, nameless — or as a tool clear enough that, when it affects someone’s life, human responsibility does not disappear behind the machine.

The European compass points in that direction: useful, competitive AI that is transparent enough for errors to be found, corrected, and attributed.

Learn and get certified

If this topic interests you and you want to understand how to apply these rules in companies, platforms, digital products, or AI projects, you can take the course and get certified at www.actactact.com.

AI is already changing the digital world. Understanding its rules is becoming a professional advantage.

Sources

[^1]: European Commission, “AI Act”. https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

[^2]: European Commission, “European approach to artificial intelligence”. https://digital-strategy.ec.europa.eu/en/policies/european-approach-artificial-intelligence

[^3]: European Commission, “Navigating the AI Act”. https://digital-strategy.ec.europa.eu/en/faqs/navigating-ai-act

[^4]: European Commission, “Guidelines on prohibited artificial intelligence practices, as defined by the AI Act”. https://digital-strategy.ec.europa.eu/en/library/commission-publishes-guidelines-prohibited-artificial-intelligence-ai-practices-defined-ai-act

[^5]: European Commission, “AI Factories”. https://digital-strategy.ec.europa.eu/en/policies/ai-factories